Using Visual Language Models to Control Bionic Hands: ICAT 2025

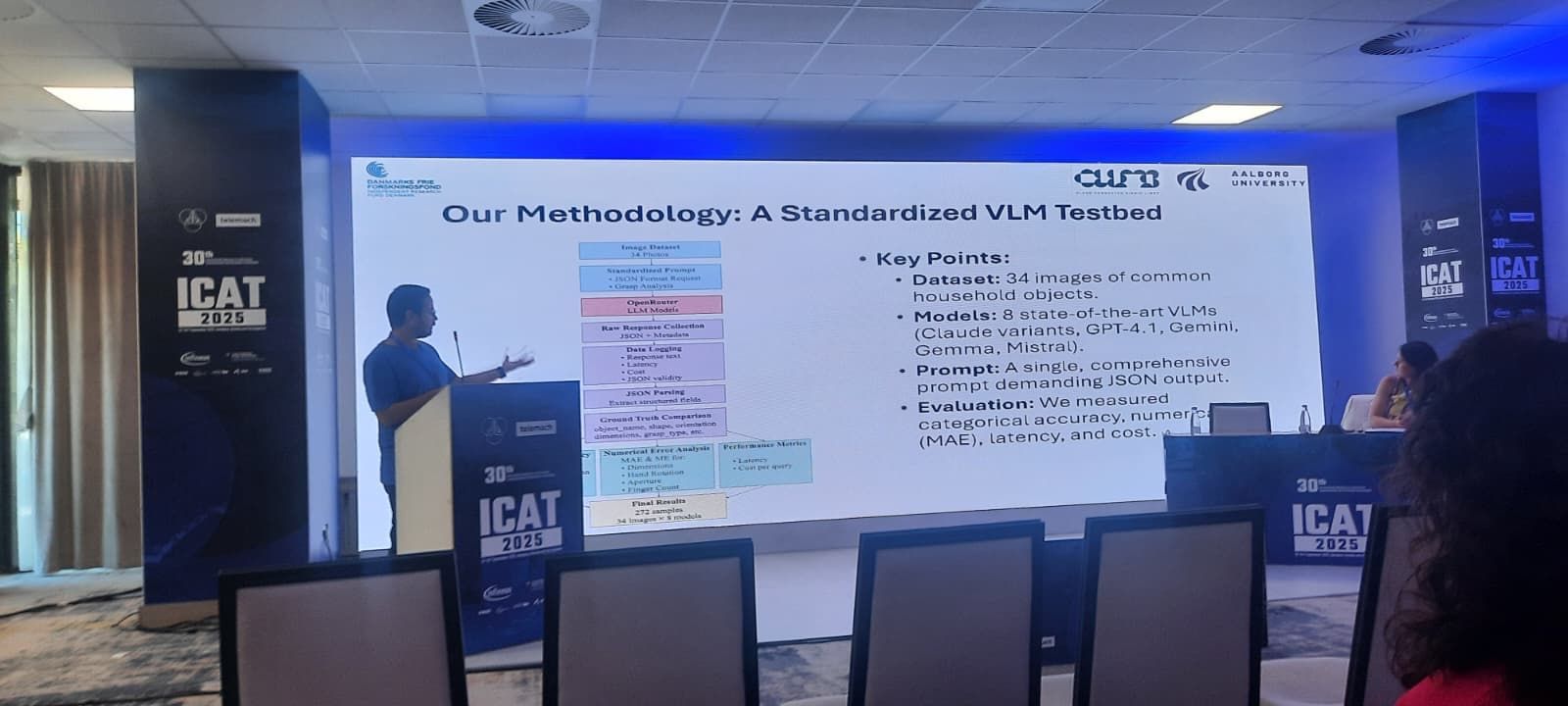

We investigate visual language models (VLMs) as a way to improve object perception and grasp inference for bionic hands. An onboard camera streams scene context to a model running at the edge, which returns interpretable grasp suggestions together with the rationale behind each one, supporting both the control strategy and user interaction.
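As a rough illustration of what an interpretable grasp suggestion could look like on the controller side, the sketch below parses a JSON reply from an edge model into a typed record and falls back to a default grasp when the reply is unusable. Everything here is an assumption made for illustration and is not taken from the paper: the JSON field names (`object`, `grasp`, `rationale`), the grasp vocabulary in `GRASP_TYPES`, and the names `GraspSuggestion` and `parse_grasp_response` are all hypothetical.

```python
import json
from dataclasses import dataclass

# Hypothetical grasp vocabulary; the label set used in the paper is not stated here.
GRASP_TYPES = {"power", "pinch", "lateral", "tripod"}


@dataclass(frozen=True)
class GraspSuggestion:
    object_name: str
    grasp_type: str
    rationale: str


def parse_grasp_response(raw: str, fallback: str = "power") -> GraspSuggestion:
    """Turn a JSON reply from a (hypothetical) edge VLM into a GraspSuggestion.

    Malformed or unrecognized replies yield the fallback grasp so the
    controller always receives a valid grasp type.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        data = {}
    if not isinstance(data, dict):
        data = {}

    object_name = str(data.get("object", "unknown"))
    grasp = str(data.get("grasp", "")).strip().lower()
    rationale = str(data.get("rationale", "")).strip()

    if grasp not in GRASP_TYPES:
        # Discard the model's rationale: it does not describe the fallback grasp.
        grasp = fallback
        rationale = "model reply missing or unrecognized; using fallback grasp"

    return GraspSuggestion(object_name, grasp, rationale)


if __name__ == "__main__":
    reply = '{"object": "mug", "grasp": "power", "rationale": "wide cylindrical body"}'
    print(parse_grasp_response(reply))
```

Keeping the rationale next to the grasp type in one record makes it possible to show the user why a grasp was chosen, while the validation step ensures free-form model output never reaches the hand controller unchecked.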

- Authors: Ozan Karaali, Hossam Farag, Strahinja Dosen, Cedomir Stefanovic

- Venue: ICAT 2025 - https://icat.etf.unsa.ba/2025/index.html