ICAT 2025: Using Visual Language Models to Control Bionic Hands

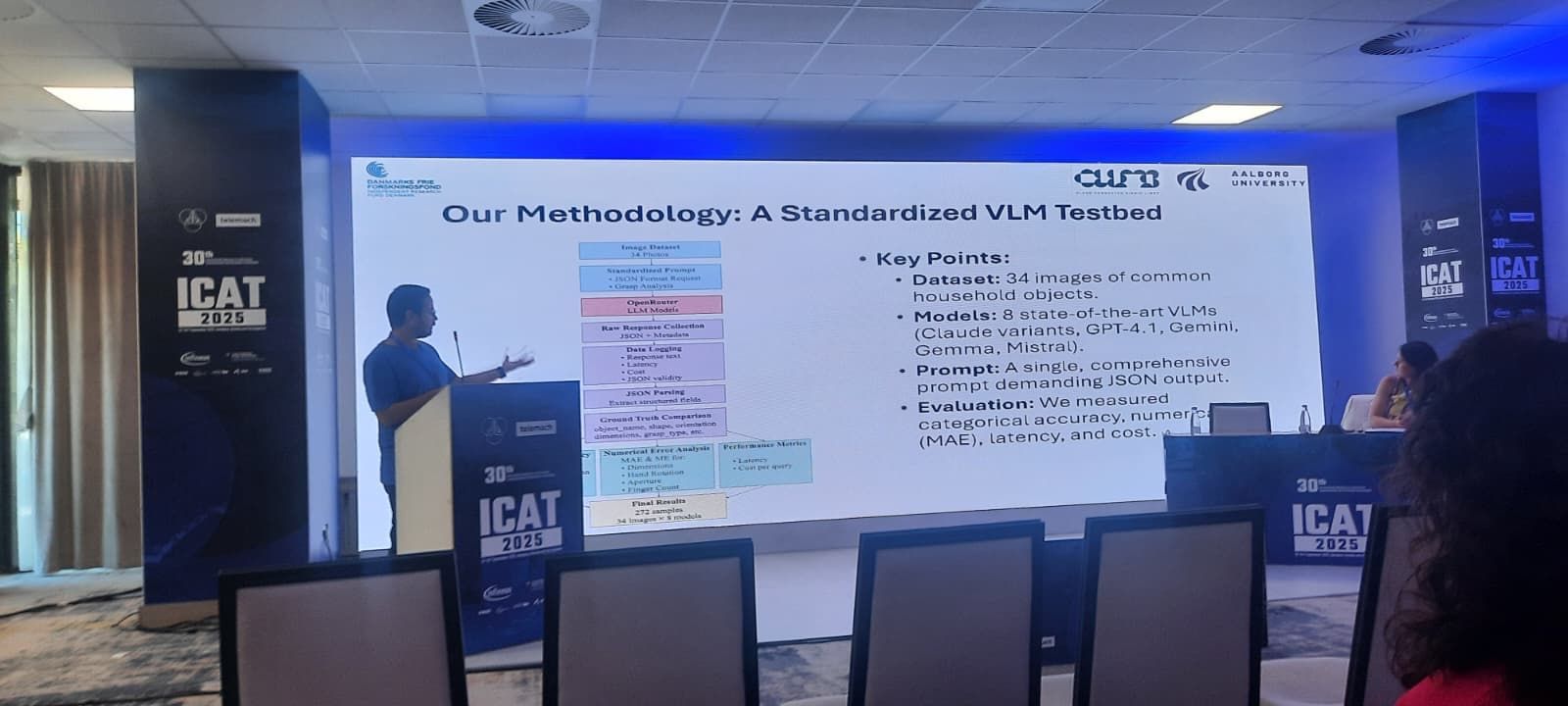

We presented our ongoing work at ICAT 2025 on leveraging visual-language models to improve object perception and grasp inference for bionic hands. The approach pairs an onboard camera with a lightweight client that streams context to an edge model, returning interpretable grasp suggestions. ...

read more